Mark Zuckerberg today announced that Facebook is rolling out enhanced AI to scan posts for signs of suicidal thoughts. The new system, which doesn’t rely on users to flag or report content, is set to roll out worldwide with the exception of countries in the EU, due to restrictions there.

The social network’s new algorithms use pattern recognition to search posts and comments for keywords. If the AI recognizes cause for concern, such as commenters asking the original poster if they’re okay or if they need help, it sends the information to a Facebook employee for review.
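Facebook hasn’t published how its system works, so the following is only a minimal sketch of the general idea described above: scan a post’s comments for worried phrases and, if enough turn up, hand the post to a human reviewer. Every name here (`CONCERN_PATTERNS`, `Post`, `needs_human_review`), the phrase list and the threshold are assumptions made for illustration, not Facebook’s actual code or signals.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical phrases a worried commenter might use.
# The real system's signals are not public; these are illustrative only.
CONCERN_PATTERNS = [
    re.compile(r"\bare you (ok|okay)\b", re.IGNORECASE),
    re.compile(r"\bdo you need help\b", re.IGNORECASE),
    re.compile(r"\bcan i help\b", re.IGNORECASE),
]


@dataclass
class Post:
    """A post and the comments left on it (hypothetical structure)."""
    text: str
    comments: List[str] = field(default_factory=list)


def count_concerned_comments(post: Post) -> int:
    """Count comments that match at least one concern pattern."""
    return sum(
        1
        for comment in post.comments
        if any(pattern.search(comment) for pattern in CONCERN_PATTERNS)
    )


def needs_human_review(post: Post, threshold: int = 1) -> bool:
    """Flag the post for a human reviewer once enough comments look worried.

    The threshold is an assumed tuning knob, not a known Facebook setting.
    """
    return count_concerned_comments(post) >= threshold
```

In this sketch the code never decides to intervene on its own. It only narrows down which posts a person should look at, which mirrors the review step the article describes.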

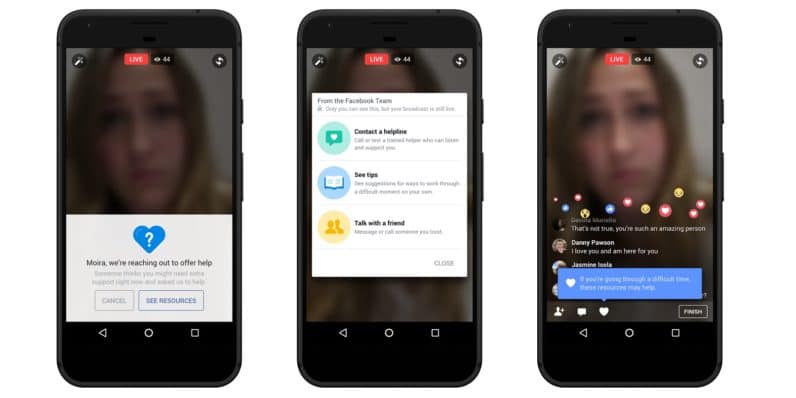

If the person reviewing the report determines intervention is necessary, they have the option to alert first responders, who can then contact the individual directly or reach out through the at-risk person’s family and friends. These employees, presumably, are part of Zuckerberg’s initiative to add 3,000 dedicated workers for the purpose of reviewing content.

In a post earlier this year, the CEO said, “If we’re going to build a safe community, we need to respond quickly.” He also pointed out that the company was working on a faster method for finding and reporting suicidal behavior on the platform.

While details about the changes to the algorithms remain scarce, the system has already led to more than 100 interventions by first responders with potentially suicidal individuals, according to Zuckerberg’s post. And if even a single life was saved, the engineers who built the AI should be commended.

Suicide is the second leading cause of death for Americans under the age of 44, and is responsible for nearly 50,000 deaths every year in the US. Facebook’s AI may not fix the problem, but it’s another tool in the fight.